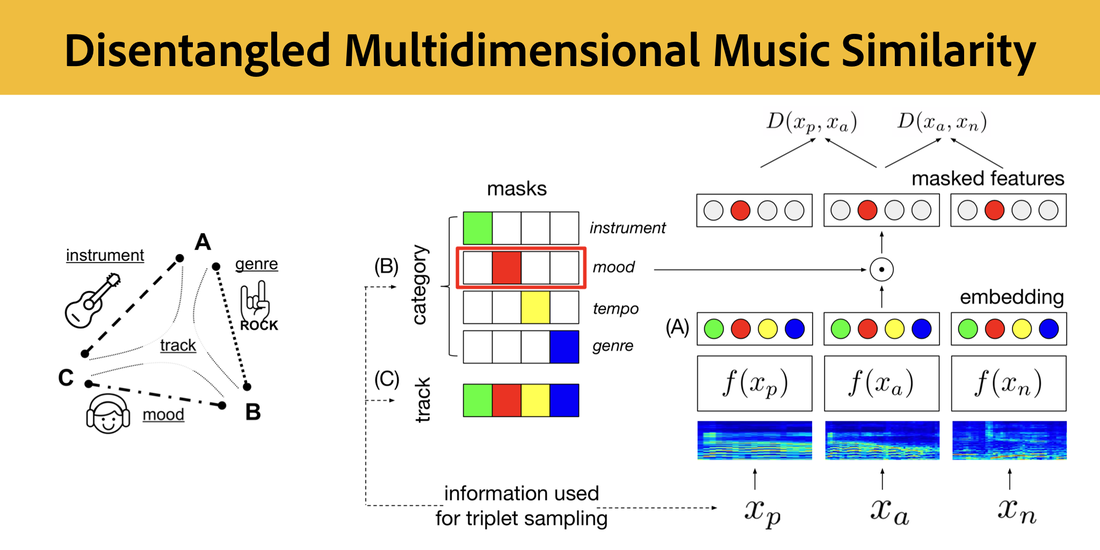

Disentangled Multidimensional Metric Learning for Music Similarity

J. Lee, N.J. Bryan, J. Salamon, Z. Jin, J. Nam

In IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), Barcelona, Spain, May 2020.
