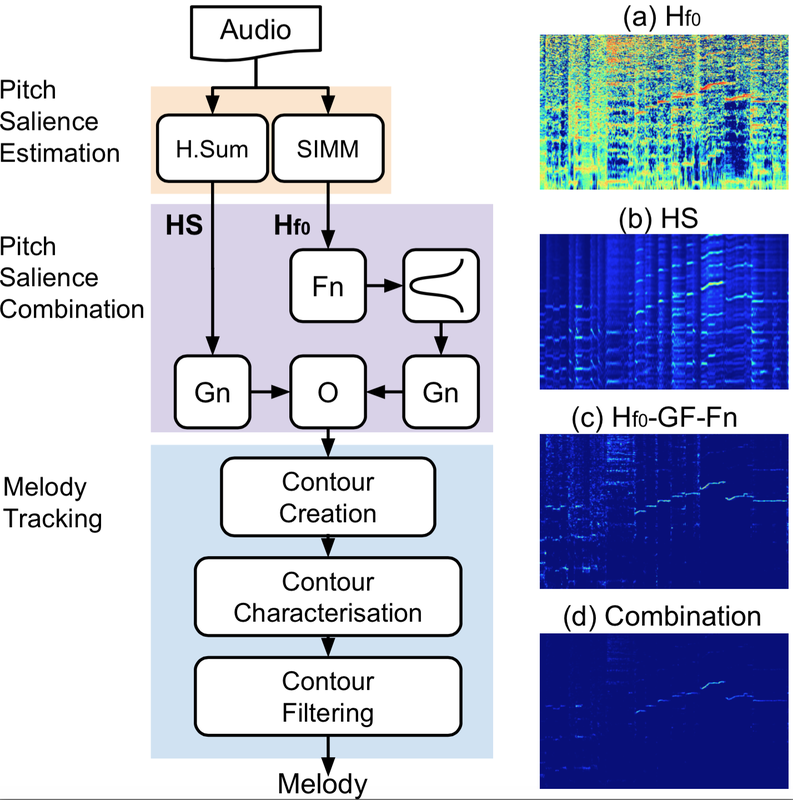

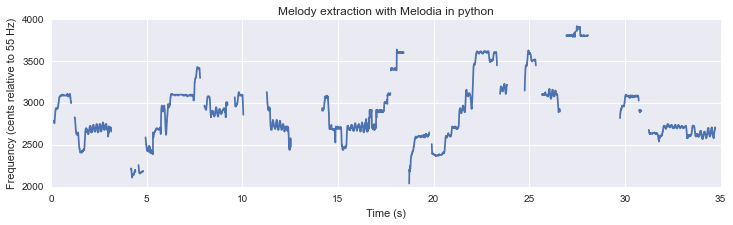

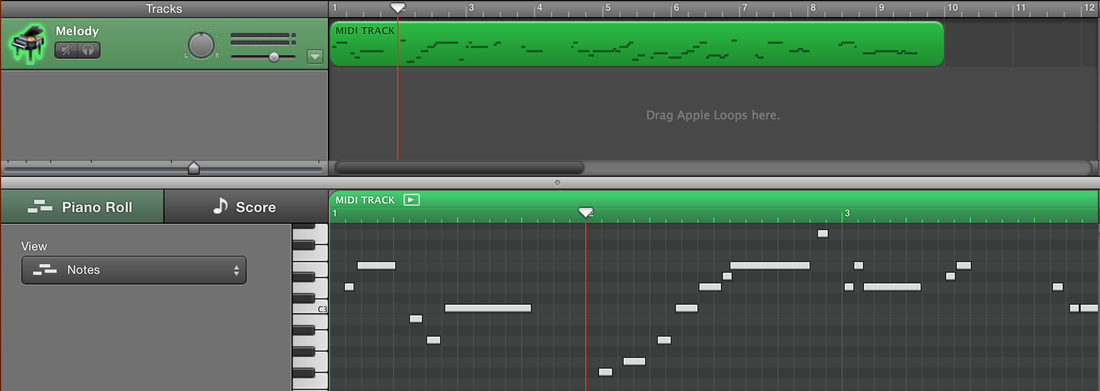

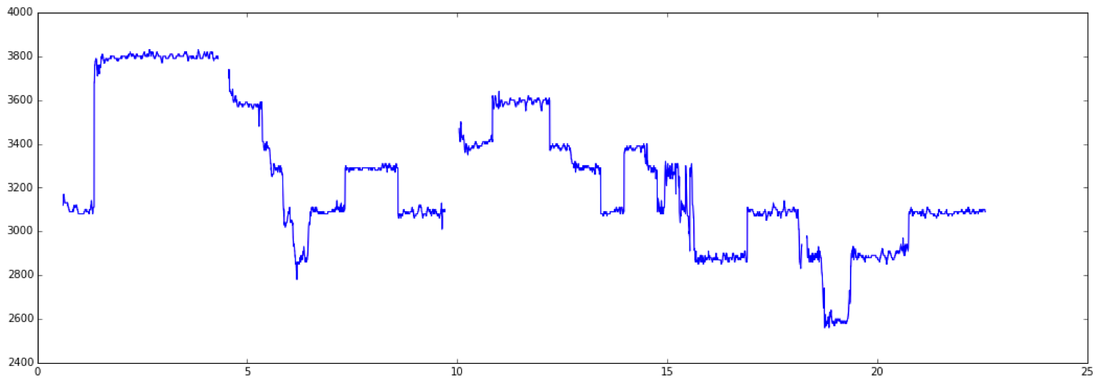

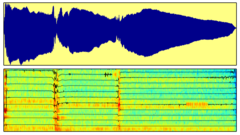

| This work explores the use of source-filter models for pitch salience estimation and their combination with different pitch tracking and voicing estimation methods for automatic melody extraction. Source-filter models are used to create a mid-level representation of pitch that implicitly incorporates timbre information. The spectrogram of a musical audio signal is modelled as the sum of the leading voice (produced by human voice or pitched musical instruments) and accompaniment. The leading voice is then modelled with a Smoothed Instantaneous Mixture Model (SIMM) based on a source-filter model. The main advantage of such a pitch salience function is that it enhances the leading voice even without explicitly separating it from the rest of the signal. We show that this is beneficial for melody extraction, increasing pitch estimation accuracy and reducing octave errors in comparison with simpler pitch salience functions. The adequate combination with voicing detection techniques based on pitch contour characterisation leads to significant improvements over state-of-the-art methods, for both vocal and instrumental music. |

A Comparison of Melody Extraction Methods Based on Source-Filter Modelling

J. J. Bosch, R. M. Bittner, J. Salamon, and E. Gómez

Proc. 17th International Society for Music Information Retrieval Conference (ISMIR 2016), New York City, USA, Aug. 2016.
