Read the full paper here:

Per-Channel Energy Normalization: Why and how

V. Lostanlen, J. Salamon, M. Cartwright, B. McFee, A. Farnsworth, S. Kelling, and J. P. Bello.

IEEE Signal Processing Letters, 26(1): 39–43, Jan. 2019.

[IEEE][PDF][BibTeX][Copyright]

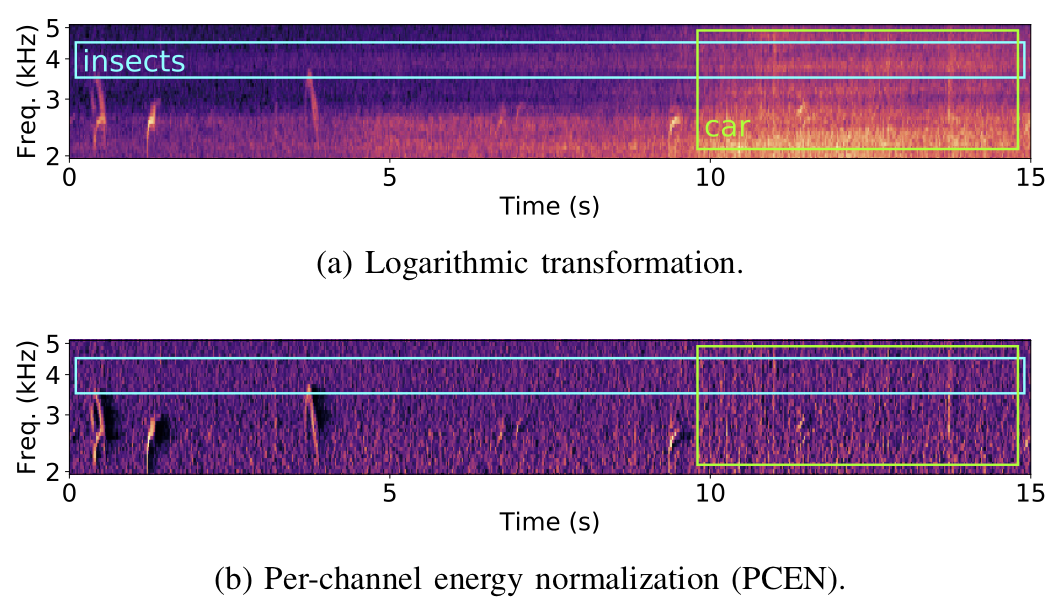

Here's a plot from our paper comparing log compression and PCEN, each applied to a mel-spectrogram of an audio recording captured by a remote acoustic sensor for avian flight call detection (as part of our BirdVox project). In the top plot (log), energy from undesired noise sources such as insects and a car is clearly visible. In the bottom plot (PCEN), these confounding factors are attenuated, while the flight calls we wish to detect (which appear as very short chirps) are preserved.
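To make the contrast in the plot concrete, here is a minimal sketch of PCEN on a single frequency channel, written in plain Python. It follows the usual PCEN recipe (a first-order low-pass filter for the smoothed energy, adaptive gain control, then root compression), but the function names, the parameter defaults, the choice to initialize the filter with the first frame, and the toy energy values are all assumptions made for illustration, not settings or data from the paper.

```python
import math


def pcen_channel(energies, s=0.025, alpha=0.98, delta=2.0, r=0.5, eps=1e-6):
    """Apply PCEN to one frequency channel (a sequence of non-negative energies).

    Parameter defaults are illustrative assumptions, not recommended settings.
    """
    out = []
    smoothed = None
    for e in energies:
        # First-order IIR low-pass filter tracking the slowly varying energy.
        # Assumption: the filter state starts at the first frame's energy.
        smoothed = e if smoothed is None else (1.0 - s) * smoothed + s * e
        # Adaptive gain control: divide by the smoothed energy raised to alpha.
        gained = e / (eps + smoothed) ** alpha
        # Dynamic range compression: offset root compression.
        out.append((gained + delta) ** r - delta ** r)
    return out


def log_channel(energies, eps=1e-6):
    """Plain log compression, for comparison."""
    return [math.log(e + eps) for e in energies]


# Toy channels with made-up energies: two stationary noise levels and one
# quiet channel containing a single short burst (a stand-in for a chirp).
quiet_noise = [1.0] * 200
loud_noise = [100.0] * 200
chirp = [1.0] * 200
chirp[100] = 10.0

# How far apart do the two stationary noise floors end up?
log_gap = log_channel(loud_noise)[-1] - log_channel(quiet_noise)[-1]
pcen_gap = pcen_channel(loud_noise)[-1] - pcen_channel(quiet_noise)[-1]

# How does the burst compare with the frame just before it?
pcen_chirp = pcen_channel(chirp)

print(f"log gap between noise floors:  {log_gap:.3f}")
print(f"PCEN gap between noise floors: {pcen_gap:.3f}")
print(f"PCEN before burst: {pcen_chirp[99]:.3f}, at burst: {pcen_chirp[100]:.3f}")
```

In this toy setup, log compression keeps the two stationary noise floors far apart, whereas PCEN maps them to nearly the same value and still lets the short burst stand out. That is the same qualitative behavior as in the plot: slowly varying background energy is flattened, and transient events survive.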
